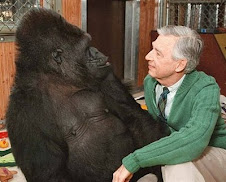

“Dogs are a very important part…” https://www.threads.com/@nprscottsimon/post/DYXJaSAibyP?xmt=AQG0O2KB81VYNV6qh1QFy6RRHxTU_seFn1kv06FijwxiHKWuPFo4svJhvdkF-kb9JR_fUOwM&slof=1

Saturday, May 16, 2026

Wednesday, May 13, 2026

The Nobel-Winning Psychologist Who Believed He Found the Secret to Happiness

Colbert

https://www.garrisonkeillor.com/radio/the-writers-almanac-for-wednesday-may-13-2026/

Monday, May 11, 2026

Davy discovers nitrous

“Finally, he arrived at the most propitious of the gases: nitrous oxide. He arrived at it by chance, while experimenting with nitrogen—“perfectly respirable when pure”—which induced strange effects as soon as it bonded with oxygen. “I made a discovery yesterday which proves how necessary it is to repeat experiments,” he wrote to his closest friend back home on April 10, 1799, then added: This gas raised my pulse upwards of twenty strokes, made me dance about the laboratory as a madman, and has kept my spirits in a glow ever since. The discovery would soon confer upon nitrous oxide the nickname “laughing gas” and upon Davy the status of international celebrity.” — Traversal by Maria Popova https://a.co/09ytBSfg

Wednesday, May 6, 2026

Tuesday, May 5, 2026

Win a victory

https://www.garrisonkeillor.com/radio/the-writers-almanac-for-monday-may-4-2026/

Monday, May 4, 2026

Saturday, May 2, 2026

Walk

Walk: Rediscover the Most Natural Way to Boost Your Health and Longevity―One Step at a Time https://a.co/d/08o3aTF4

every day

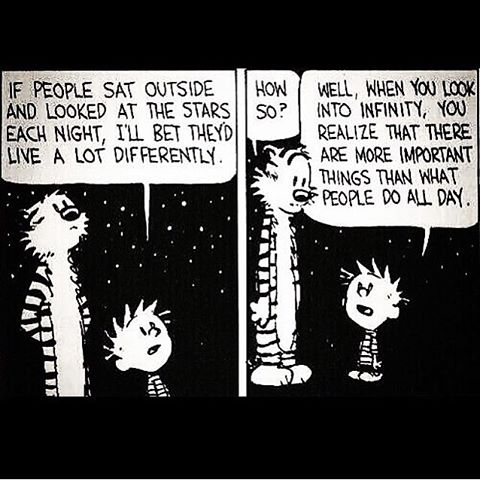

“One ought, every day at least, to hear a little song, read a good poem, see a fine picture, and, if it were possible, to speak a few reasonable words.” — Johann Wolfgang von Goethe

Friday, May 1, 2026

Vitalism

...

https://www.nytimes.com/2026/05/01/opinion/donald-trump-animal-spirits.html?smid=em-share

Charles Darwin (
